The Intel 8086 Gets ICE’d

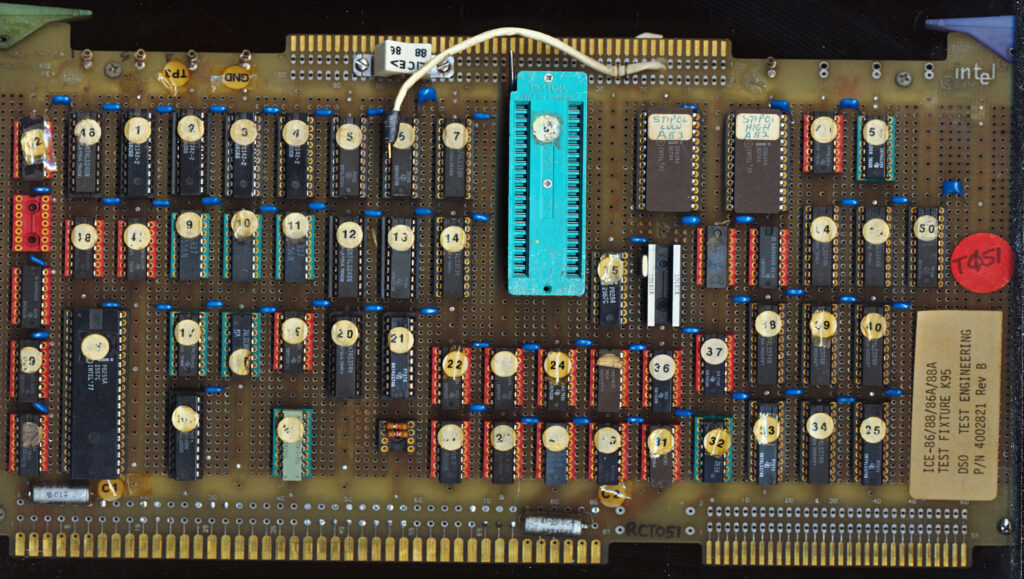

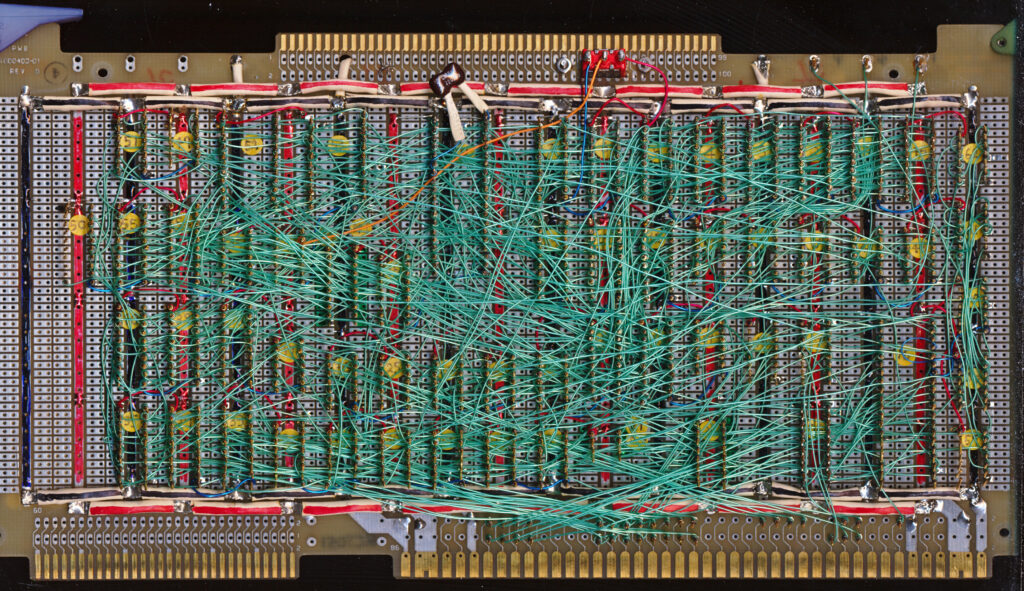

A while back I received this rather unusual board. Made in 1979, it was clearly a prototype: a completely handmade, wire-wrapped board built on a standard Intel MULTIBUS breadboard from 1974. No CPU was present, but a 3M TEXTOOL socket for a CPU is. The paper sticker on the board reads ICE-86/86A/88/88A TEST FIXTURE K95 and DSO TEST ENGINEERING.

The ICE-86 (and the ICE-86A, ICE-88 and ICE-88A) were all MULTIBUS In-Circuit Emulators Intel made for the iAPX86 processors from 1979 to 1985 or so. These were 3-board sets with an emulator pod (containing an 808x processor), meant for developing and testing x86 software and hardware designs. The boards would plug into an Intel MDS or MDS2 system (or Intel Intellec) and, with supporting software, formed the basis of much of the original x86 hardware/software design of the era. I assumed this board was part of that set, but alas, while researching it I got ICE’d.

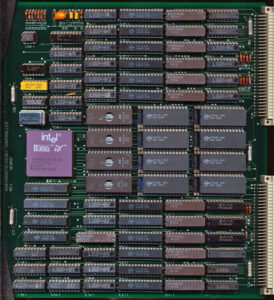

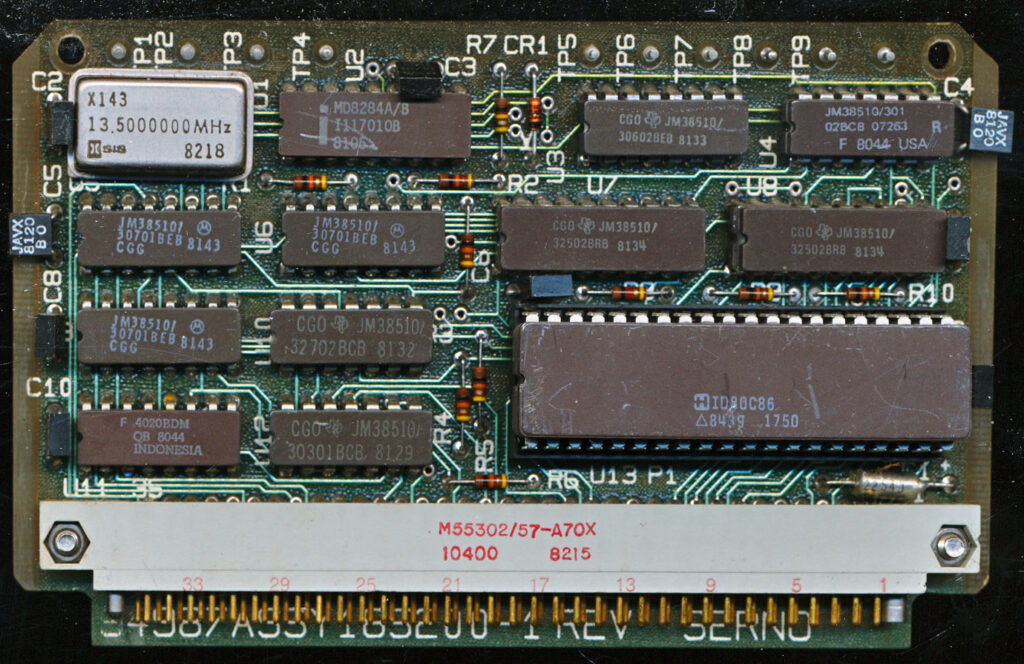

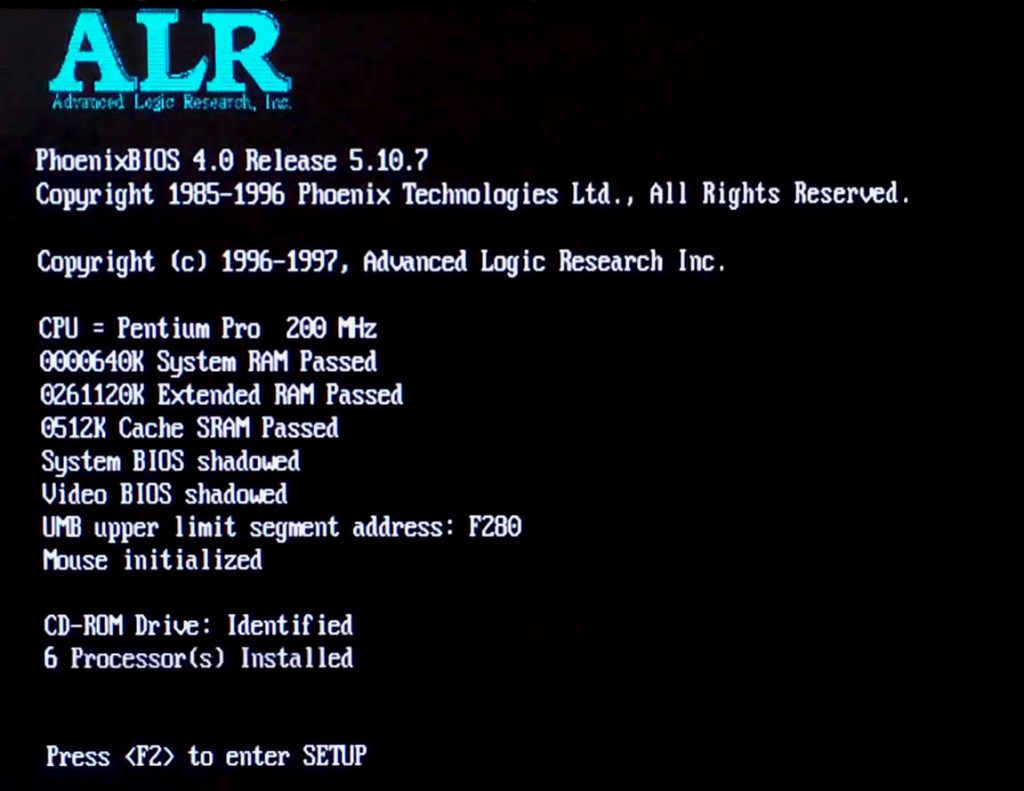

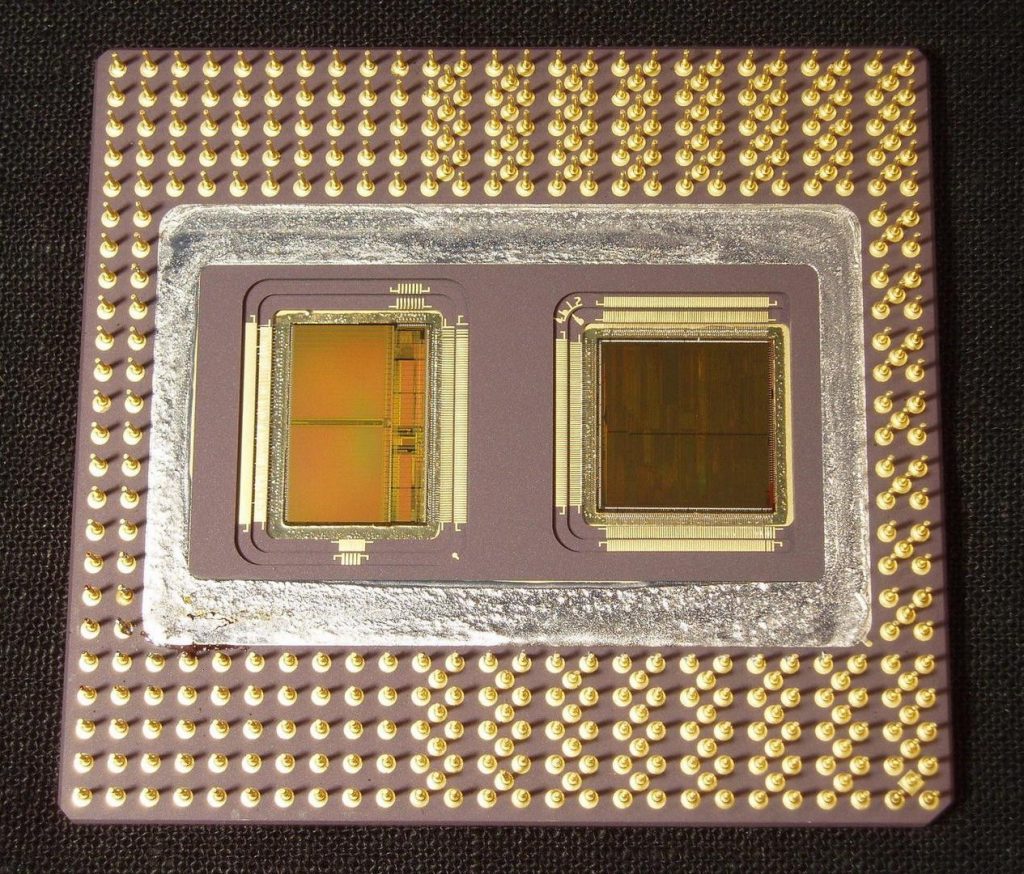

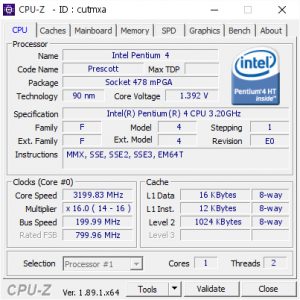

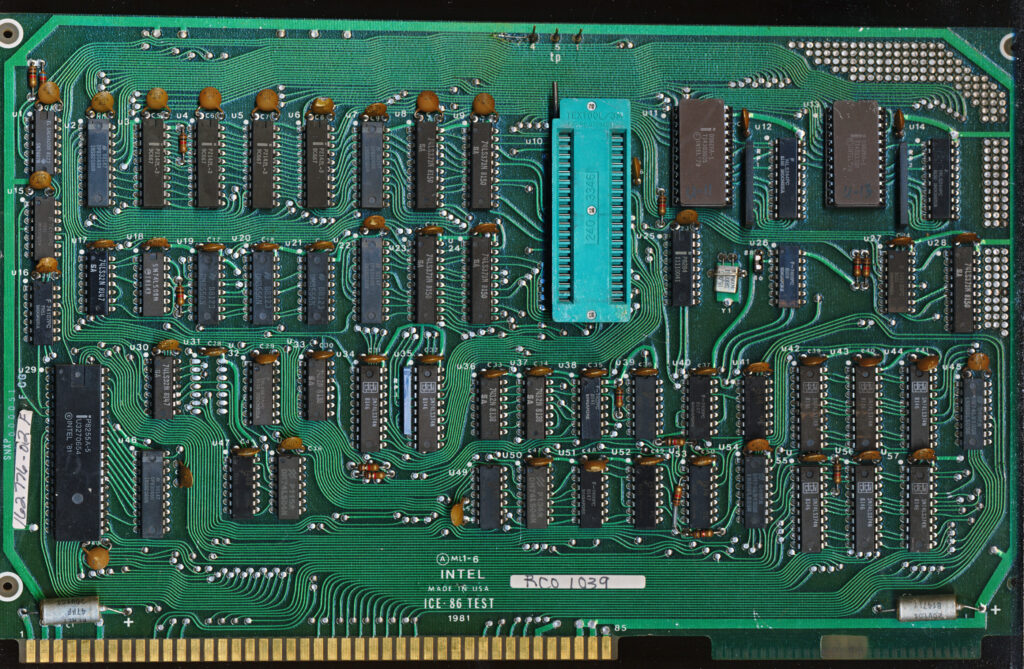

The ICE-8x systems are based on an Intel 8080A processor, so I checked the pinout of the socket on the prototype. VCC/GND did not match that of an 8080A CPU, but it DID match that of an 8086. Furthermore, the clock generator on the board is a P8284, the clock generator for the 8086/88 processors, which takes the 15MHz crystal input, divides it by 3, and outputs a 5MHz clock. The 8080A processor of the ICE-86 emulator system uses an 8224 clock generator (which is a divide-by-9 clock generator, usually running on a 9-10MHz or 18-19MHz crystal). To make matters more interesting, I also have a couple of later boards (1982 production) which are clearly production versions of the prototype (likely a limited run, as the part numbers are still handwritten). They are labeled as ICE-86 TEST – 1981.
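The clock arithmetic above is easy to check by hand, but here is a minimal sketch of it in Python. The function name is made up for illustration, and the 18MHz crystal for the 8224 is just an assumed round value from the 18-19MHz range mentioned above, not something measured on a real ICE-86 board.

```python
def divided_clock_mhz(crystal_mhz: float, divisor: int) -> float:
    """Return the output clock of a simple divide-by-N clock generator."""
    return crystal_mhz / divisor

# 8284 on the test fixture: 15MHz crystal, divide by 3.
clk_8284 = divided_clock_mhz(15.0, 3)

# 8224 for an 8080A: divide by 9. The 18MHz crystal is an assumed,
# illustrative value picked from the 18-19MHz range.
clk_8224 = divided_clock_mhz(18.0, 9)

print(f"8284 output: {clk_8284} MHz")
print(f"8224 output: {clk_8224} MHz")
```

A 15MHz crystal only makes sense with the divide-by-3 part, which is one more hint that the socket was meant for an 8086/88 and not an 8080A.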

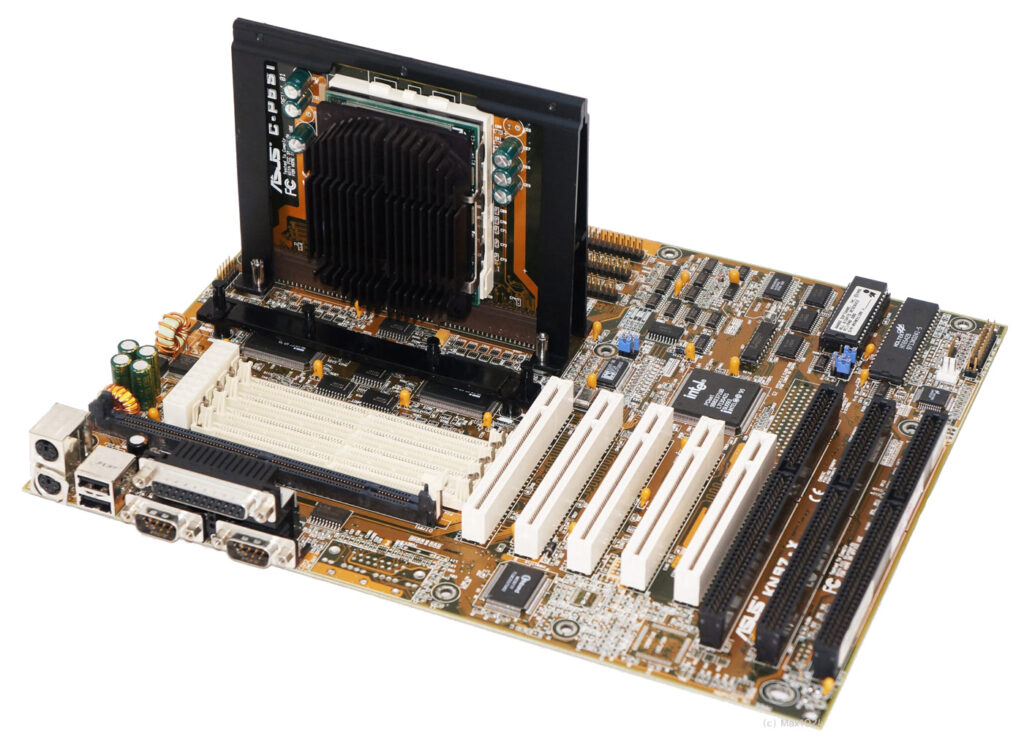

Production version of the ICE-86 TEST made in early 1982. Curiously this is a MULTIBUS board but about an inch (2.5cm) taller than standard. This was probably not meant to remain in a host system for long.

The prototype has a switch on it labeled ‘ICE’ for switching the board from 8086 mode to 8088 mode, while the production board lacks such a switch (it’s designed solely for 8086 processors). The prototype has a pair of D3604A 4k (512×8) PROMs, while the production version runs a pair of 3628A 8k versions, which were not available when the prototype was made. So what, then, would be the purpose of a board labeled ICE that, well, isn’t an ICE?
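For a sense of how little firmware these boards carry, here is a rough capacity calculation. The 512×8 organization of the D3604A comes from the text above; the 1024×8 organization for the 3628A is my assumption based on its 8k rating, and the helper name is purely illustrative.

```python
def prom_bits(words: int, width: int) -> int:
    """Capacity in bits of a PROM organized as words x width."""
    return words * width

# Prototype: D3604A, 512 x 8 (from the board description).
proto_bits = prom_bits(512, 8)

# Production: 3628A, assumed to be organized as 1024 x 8 ("8k").
prod_bits = prom_bits(1024, 8)

# Each board carries a pair of PROMs; convert the total to bytes.
proto_pair_bytes = 2 * proto_bits // 8
prod_pair_bytes = 2 * prod_bits // 8

print(f"Prototype: {proto_bits} bits per PROM, {proto_pair_bytes} bytes per pair")
print(f"Production: {prod_bits} bits per PROM, {prod_pair_bytes} bytes per pair")
```

Under those assumptions the prototype holds 1KB of code in total and the production board 2KB, which fits a small self-test or stimulus routine and not much else.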

These boards were designed for testing ICE emulators, and eventually for giving end users the ability to test their software on a known working 8086/88 system. Generally, when using an emulator, you would plug the probe into the processor socket on the target system you are developing, and the emulator system allows you to set breakpoints, check register values, inspect memory, etc. These test boards would allow you to develop at least basic software WITHOUT having a target system of your own, as well as offer an in-system test of the entire ICE emulation. The production boards being labeled ‘ICE 86 TEST’ seem to be just this: a way to ensure the proper function of the, by then, thousands of ICE-86/88 board sets in use. There was very likely a separate board for testing the ICE-88/A systems as well. Plug the tester into a MULTIBUS slot on the host system, plug the probe cable into the ZIF socket, and run the testing software. The ROMs on the proto board are labeled ‘STIPOL’, which is cryptic at best, but one of their purposes would likely be to provide STImulus of some sort to the ICE emulator being tested.

The test boards would also give developers either peace of mind or headaches when designing for the x86: is the problem the emulator not working, or is there a bug in my design? Now I need to find boards from an actual ICE-86 system.
