Saturn-V

The Soviet Union’s launch of the first artificial Earth satellite, and then of the first man into space, came as a real shock to the United States and raised doubts around the world about whether the United States really was the leader of technological progress. Not only American elites but also ordinary citizens felt deeply wounded. A month and a half after Gagarin’s flight, US President John F. Kennedy announced an ambitious lunar space program. On September 12, 1962, speaking at Rice University, Kennedy again called for a manned mission to the Moon, whatever the cost.

This is the first and main reason for the Americans’ success: their lunar program became a unifying “national impulse,” for which no effort or money was spared. The Soviet Union, by contrast, took up the American challenge rather “out of inertia,” as a response to the US lunar program. By that time the USSR had already proved what it set out to prove, and there was no urgent need to be first on the Moon as well. Initially, a Moon landing was not part of the Soviet space program at all. The plans for the late ’60s and early ’70s were to build a large orbital station and a spacecraft for expeditions to Mars and Venus. Officially, the Soviet lunar program did not begin until 1964.

The key element of the lunar program was the development of a super-heavy launch vehicle capable of accelerating a craft weighing several tens of tons to the second cosmic velocity (also known as escape velocity). In the U.S., NASA began to create a family of rockets known as Saturn. Unofficially, NASA had begun to think about the Moon in 1960, even before Kennedy’s speech, and was already working on various options for heavy launchers. The name “Saturn V” hints that the launch vehicle was the fifth model in the family. There were other options, even heavier than the Saturn V, some of which were also planned for a Mars mission.
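For a sense of scale, the second cosmic velocity follows from the textbook relation v = sqrt(2·GM/R). The short Python sketch below evaluates it at the Earth's surface. The constants and the function name are illustrative assumptions, not figures from the Saturn program, and the result ignores atmospheric drag and the fact that a real lunar flight departs from a parking orbit.

```python
import math

# Standard gravitational parameter of the Earth (G*M), m^3/s^2 (assumed reference value).
EARTH_GM = 3.986004418e14
# Mean radius of the Earth, m (assumed reference value).
EARTH_RADIUS = 6.371e6


def escape_velocity(gm: float, radius: float) -> float:
    """Return the escape velocity in m/s at distance `radius` from the body's center."""
    return math.sqrt(2.0 * gm / radius)


v2 = escape_velocity(EARTH_GM, EARTH_RADIUS)
print(f"Second cosmic velocity at the surface: {v2 / 1000:.1f} km/s")
```

With these inputs the result comes out at roughly 11.2 km/s, which is the speed a launcher of this class had to impart to its payload, minus whatever the parking orbit already provides.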

The question was who would build it. The chief architect of the Saturn V, Wernher von Braun, opted to divide the work among several contractors. This allowed him to pick the best of the best across the whole industry and to use the most experienced people from each company. For the contractors, the decision meant several big orders rather than one huge order for a single firm. In the end, the main share was distributed among three companies: Boeing, North American Aviation and Douglas. All in all, more than 20,000 contractors and subcontractors were involved in the production of the rocket.

The first launch of the three-stage Saturn V rocket took place on November 9, 1967 and showed its amazing capabilities. The third stage, carrying an unmanned Apollo spacecraft and a mass-and-dimension mockup of the lunar module, entered orbit with a total mass of 126 tons. With the sixth launch, humanity took a “giant step”: the first landing of astronauts on the Moon. On July 21, 1969, astronauts Neil Armstrong and Edwin Aldrin became the first people ever to set foot on the surface of Earth’s natural satellite. The Saturn V still amazes today with its sheer scale. With a launch weight of about 3,000 tons and a height of 110 meters, it is the tallest rocket in the history of world astronautics.

N1 Business end

What about the USSR? In the Soviet Union, work on the N1, a super-heavy launch vehicle, began back in 1958. As noted above, it was originally created not for flights to the Moon, but to build an orbital station and to launch modules of interplanetary expedition spacecraft into orbit. Later, however, a belated decision was made to enter the USSR in the “lunar race” and deliver a man to the surface of the Moon, and the N1 program was accelerated. Before settling on the final layout of the launch vehicle, its creators had to evaluate at least 60 different options. They decided on a scheme with spherical tanks for fuel and oxidizer, a load-bearing outer shell supported by a structural frame, and a circular arrangement of the rocket engines in each stage. The first stage alone had 30 engines, which is a plumbing nightmare (as SpaceX is working out with its Starship launch system).

The stages of the N1 rocket were connected to each other by special transition trusses. The complete rocket complex, which comprised the N1 launch vehicle and the lunar system that would carry a crew of two to the Moon and return them to Earth (only one person would actually land on the surface), was designated N1-L3.

The N1 was never static fired. As a result, problems that a ground test could have caught without destroying a vehicle were discovered only at launch.

Getting ahead of myself, I will say that none of the launches of the N1 heavy launcher were successful.

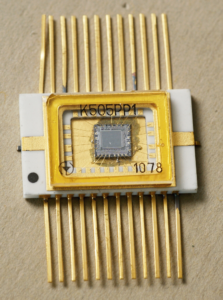

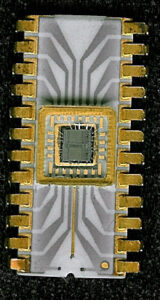

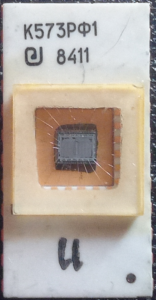

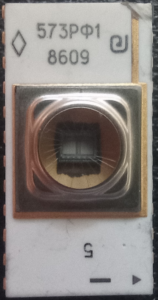

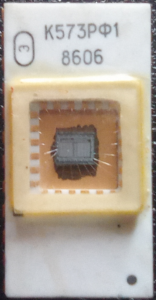

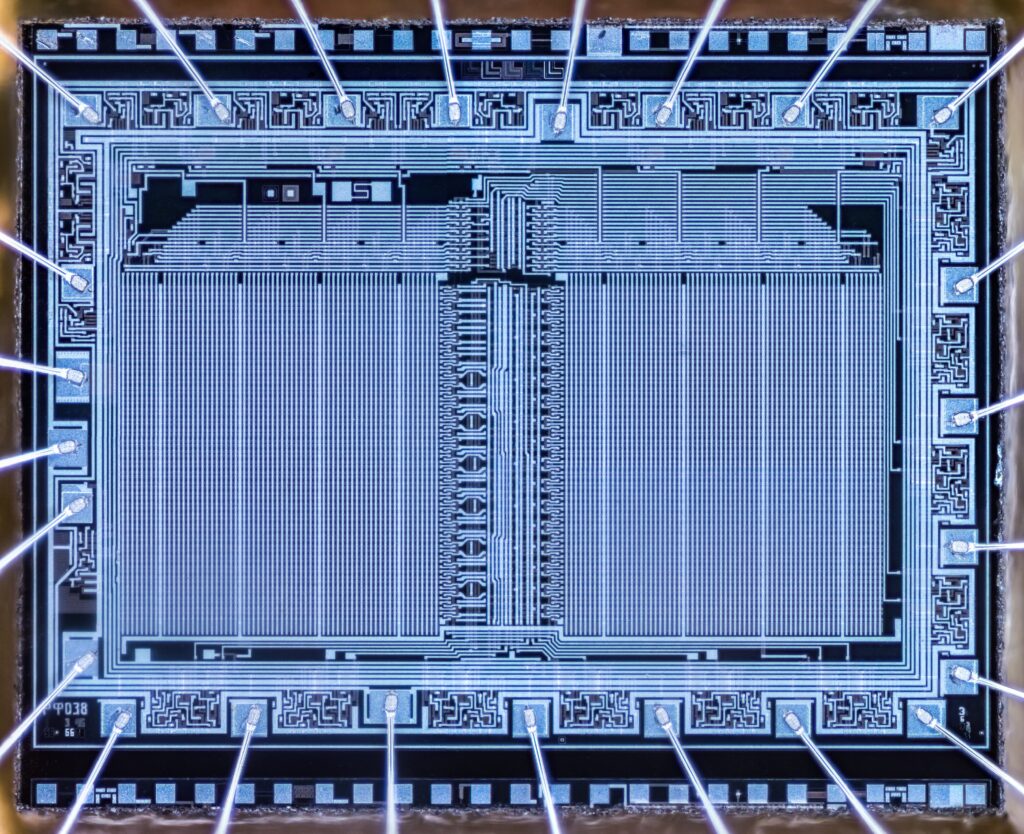

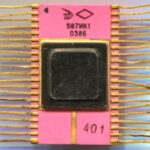

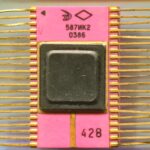

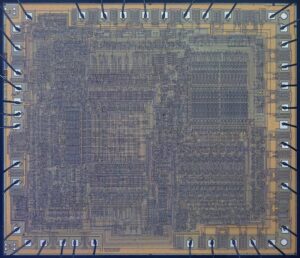

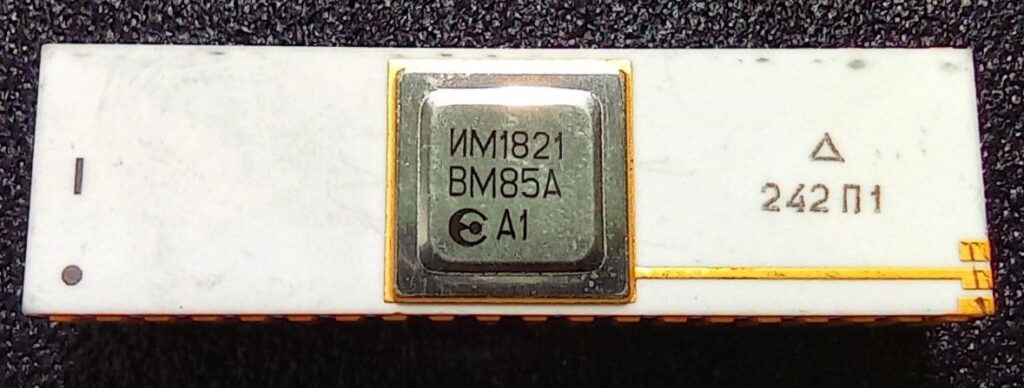

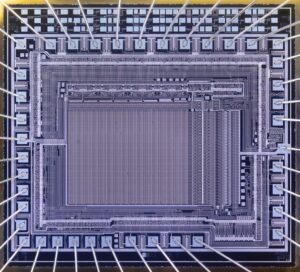

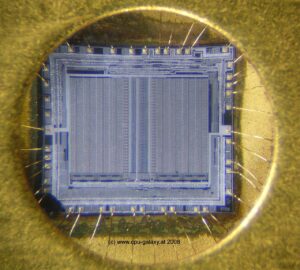

Onboard computer “Argon-11S”.

While the designers were trying to correct the mistakes made in the design of the N1 rocket, the technique of flying to the Moon and returning to Earth after a ballistic pass around Earth’s natural satellite was being worked out. The Zond series of spacecraft was launched on the Proton launch vehicle. These spacecraft were an unmanned version of the two-seat manned spacecraft. All work was carried out in conditions of high secrecy. But now we can say for sure that the Zond-6 and Zond-7 spacecraft were controlled by an onboard digital computer: the Argon-11S.

It consisted of three functionally autonomous computing devices with independent inputs and outputs, interconnected by channels for information exchange and synchronization.

Fixed-point representation. Data bus: 14 bits; commands: 17 bits. Number of commands: 15.

Execution time of operations (µs): addition – 30, multiplication – 160.

RAM capacity was 128 14-bit words, ROM capacity – 4096 17-bit words.

Weight 34 kg
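To put these figures into more familiar units, here is a small Python sketch that converts the quoted memory sizes to bits and bytes and derives peak operation rates from the quoted timings. The variable names are made up for illustration, and the rates assume back-to-back operations with no other overhead, which is an idealization rather than a measured figure.

```python
# Figures quoted above for the Argon-11S.
RAM_WORDS, RAM_WORD_BITS = 128, 14
ROM_WORDS, ROM_WORD_BITS = 4096, 17
ADD_TIME_US, MUL_TIME_US = 30, 160

ram_bits = RAM_WORDS * RAM_WORD_BITS
rom_bits = ROM_WORDS * ROM_WORD_BITS

# Peak rates assume back-to-back operations with no other overhead (an idealization).
adds_per_second = 1_000_000 / ADD_TIME_US
muls_per_second = 1_000_000 / MUL_TIME_US

print(f"RAM: {ram_bits} bits ({ram_bits / 8:.0f} bytes)")
print(f"ROM: {rom_bits} bits ({rom_bits / 8:.0f} bytes)")
print(f"Additions per second: {adds_per_second:,.0f}")
print(f"Multiplications per second: {muls_per_second:,.0f}")
```

In other words, the working memory amounts to 224 bytes and the program store to about 8.5 KB, with on the order of 33 thousand additions or 6 thousand multiplications per second at best.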

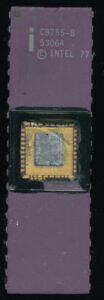

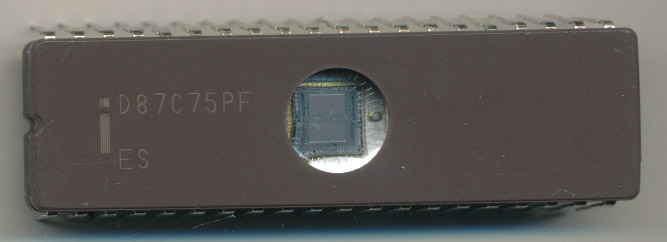

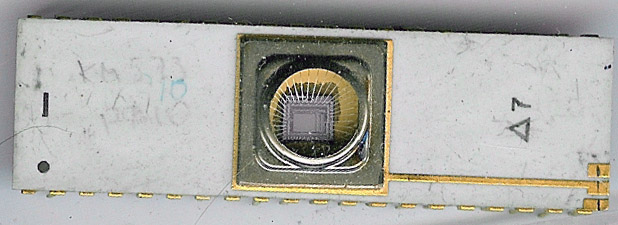

Element base: “Tropa-1” integrated hybrid microcircuits. These small-scale-integration microcircuits are made with thick-film technology and form a system of directly coupled logic elements (OR, OR-NOT, AND-NOT, etc.). Each microcircuit comes in a square metal-polymer case measuring 11.6 × 11.6 × 4 mm and weighs no more than 1.5 g.

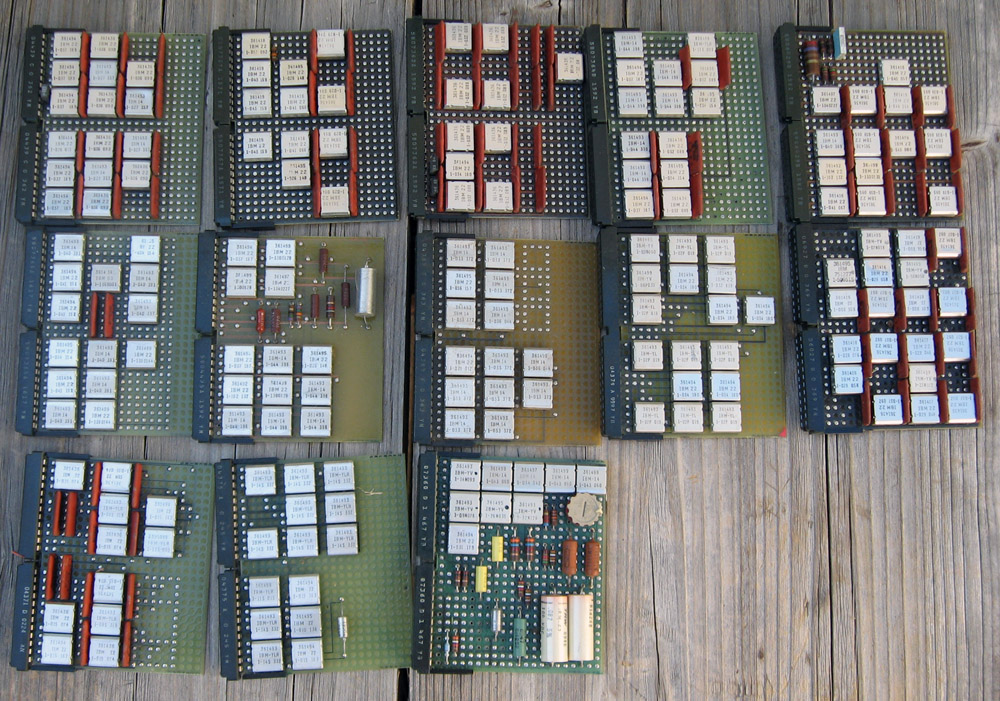

These were very similar to the Solid Logic Technology (SLT) modules that IBM was using for its System/360 mainframes in the USA in the same period.

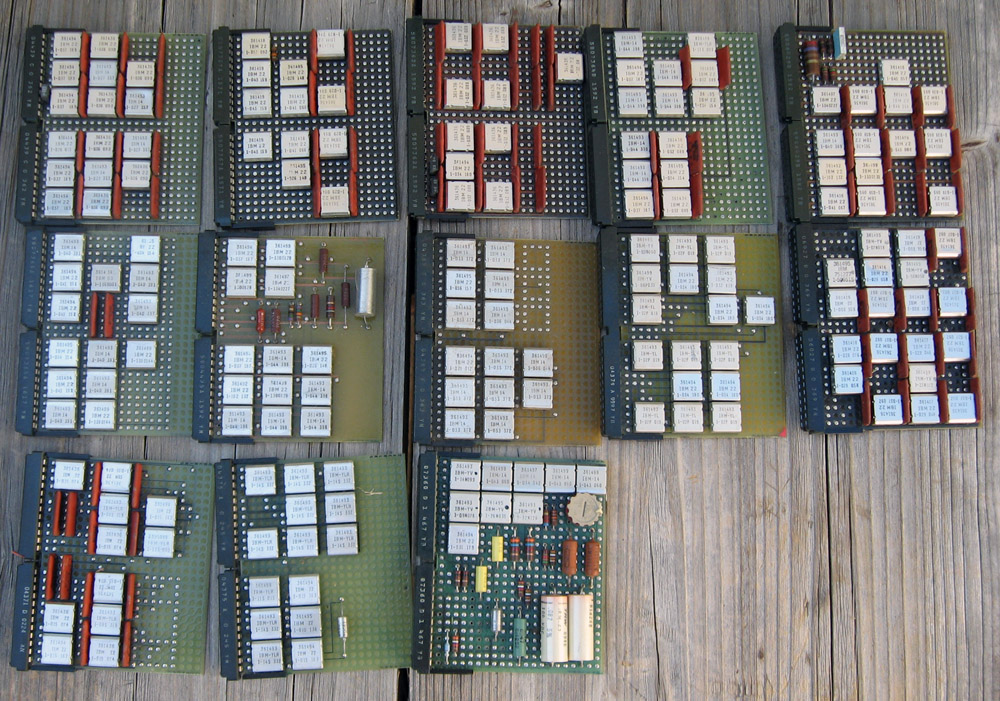

Historically, these were the first integrated circuits developed in the Soviet Union. The active elements were unpackaged (bare-die) transistors. The first prototypes of the series were named 1MD1-1MD6 and 1MM1-1MM3. Hundreds of logic circuits were mounted on printed circuit boards, which were bundled together like the pages of a book.

Structurally, the Argon-11S consisted of three identical functional blocks operating in parallel and independently of each other. The inputs of each block received exactly the same information from many telemetry sensors, and from it each block produced more than forty control actions. For the first time in the practice of building onboard computers, a node redundancy scheme was applied. The final control actions were formed by majority rule: if two of the three outputs agreed and the third differed, the value produced by the majority was used. In effect, the Argon-11S was constantly voting for the most correct control action.
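The majority principle described here can be sketched in a few lines of Python. This is only an illustration of 2-of-3 voting on binary outputs, not a reconstruction of the Argon-11S hardware: the function names, the 8-bit word and the fault pattern are all hypothetical.

```python
from typing import List


def majority_vote(a: int, b: int, c: int) -> int:
    """Bitwise 2-of-3 majority: each output bit follows at least two of the inputs."""
    return (a & b) | (a & c) | (b & c)


def faulty_channels(a: int, b: int, c: int) -> List[int]:
    """Return the indices (0..2) of channels whose word differs from the voted result."""
    voted = majority_vote(a, b, c)
    return [i for i, word in enumerate((a, b, c)) if word != voted]


# Hypothetical example: three channels compute the same 8-bit control word,
# but one bit of channel 1 is flipped by a fault.
good = 0b1011_0010
bad = good ^ 0b0000_1000

print(bin(majority_vote(good, bad, good)))  # the voted word equals the fault-free value
print(faulty_channels(good, bad, good))     # channel 1 is reported as the odd one out
```

Because the vote is taken bit by bit, a single faulty channel is outvoted on every output, so one failure among the three blocks does not change the final control actions.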

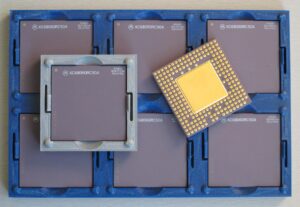

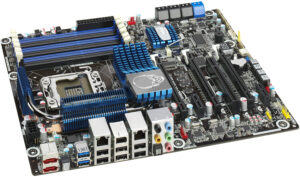

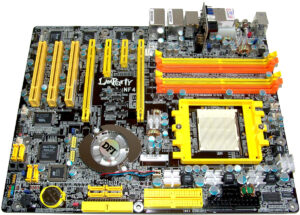

Various IBM System/360 cards showing technology similar to the Soviet Tropa series.

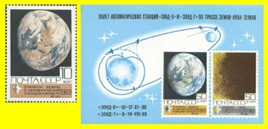

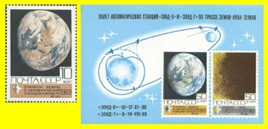

Zond-6 was the ninth launch of a prototype spacecraft. The purpose of the mission was an unmanned flight around the Moon with photography, the return of the descent module to Earth with a landing in a designated area, and a rehearsal of the manned spacecraft’s systems in automatic mode. Unfortunately, a malfunction of the parachute deployment equipment caused the vehicle to crash. After the failed return of Zond-6, Zond-7 was launched to the Moon on August 8, 1969. The day after launch, the spacecraft performed a maneuver on its intermediate trajectory and took color photographs of the Earth. On August 11, it flew around the Moon at an altitude of 1,985 km and conducted two sessions of photographing the Moon and the Earth. Zond-7 returned to Earth on August 14.

After a normal atmospheric entry, it landed successfully. The photograph taken by Zond-7 is less well known than the Blue Marble taken by the crew of Apollo 17, but it is no less beautiful.

This photo ended up on a souvenir sheet of postage stamps dedicated to the flights of these spacecraft.

It must be said that the road to Zond-7 was not smooth. Before this success there were three high-profile failures: three rockets carrying similar spacecraft (two N1s and one Proton) exploded on the launch pads.

Even though none of the N1 rockets managed to complete its launch program, the designers continued to work on the vehicle. The next launch, the fifth, was scheduled for August 1974 but never took place. In May 1974, the Soviet lunar program was closed and all work on the N1 ceased. Two rockets that were ready for launch were destroyed.

The Antares 100 series flew four times successfully with NK-33 engines; the fifth flight failed and ended in a large explosion.

All that was saved from the N1 were 150 NK-33 engines (the successor to the NK-15 used on the first N1s), manufactured for various stages of the rocket. These engines eventually got their chance to fly, in America: they were used in the first stage of the Antares 100 series launch vehicle of the Orbital Sciences Corporation (now part of Northrop Grumman, via Orbital ATK). They also continue to be used on the Russian Soyuz-2.1v rocket, whose latest launch was in April 2022, still flying engines built in the late 1960s and early 1970s, albeit with more modern electronics.

Soyuz-2.1v

Having lost the “Moon Race”, the Soviet Union concentrated its efforts on other projects that played a less prestigious but no less important role in space exploration: orbital stations, as well as a fairly successful science campaign exploring Venus. Hopefully, in the next few years we will see another truly massive rocket, with 33 engines on its first stage, launch and head to the Moon, Mars, and beyond.

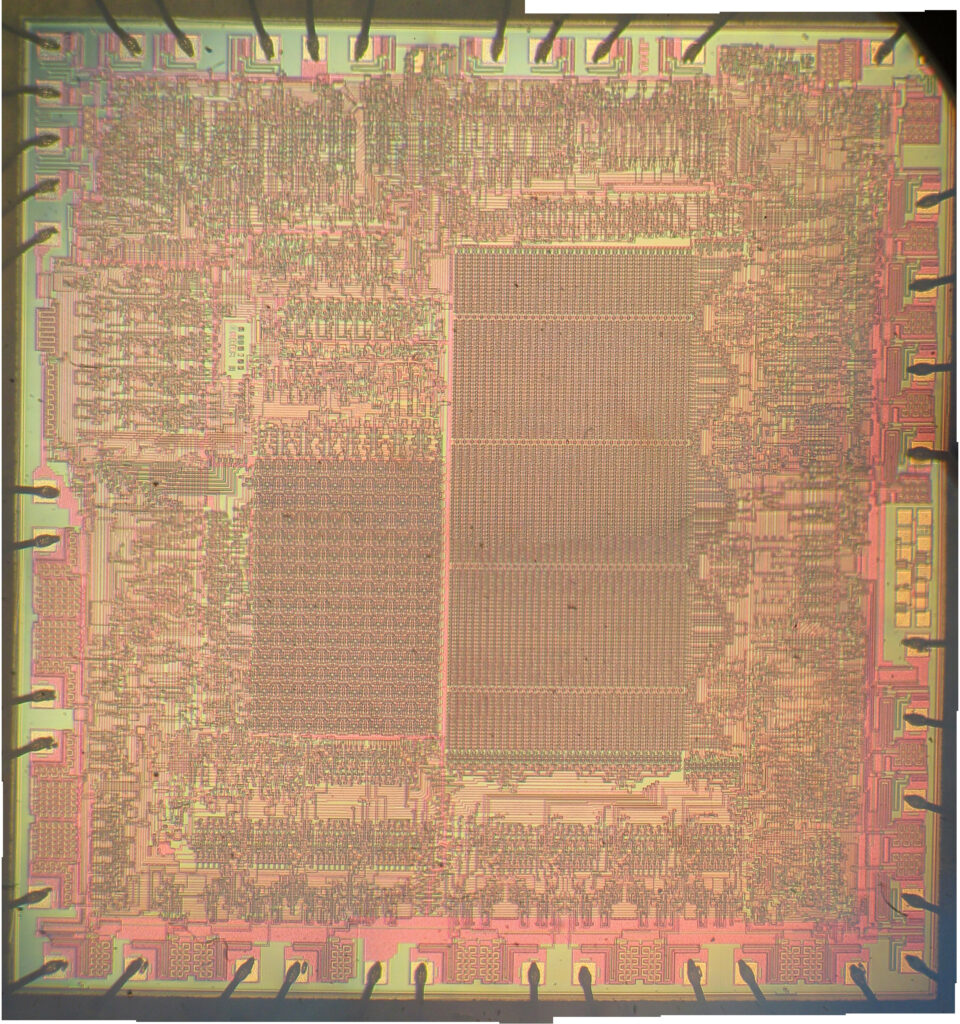

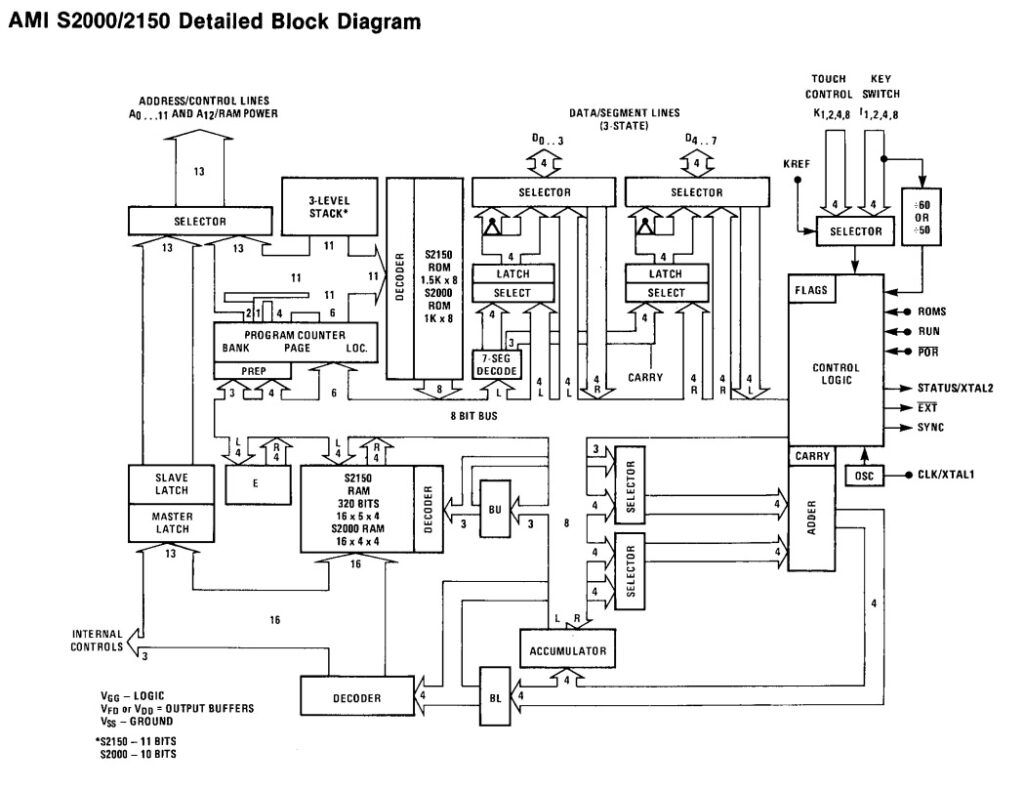

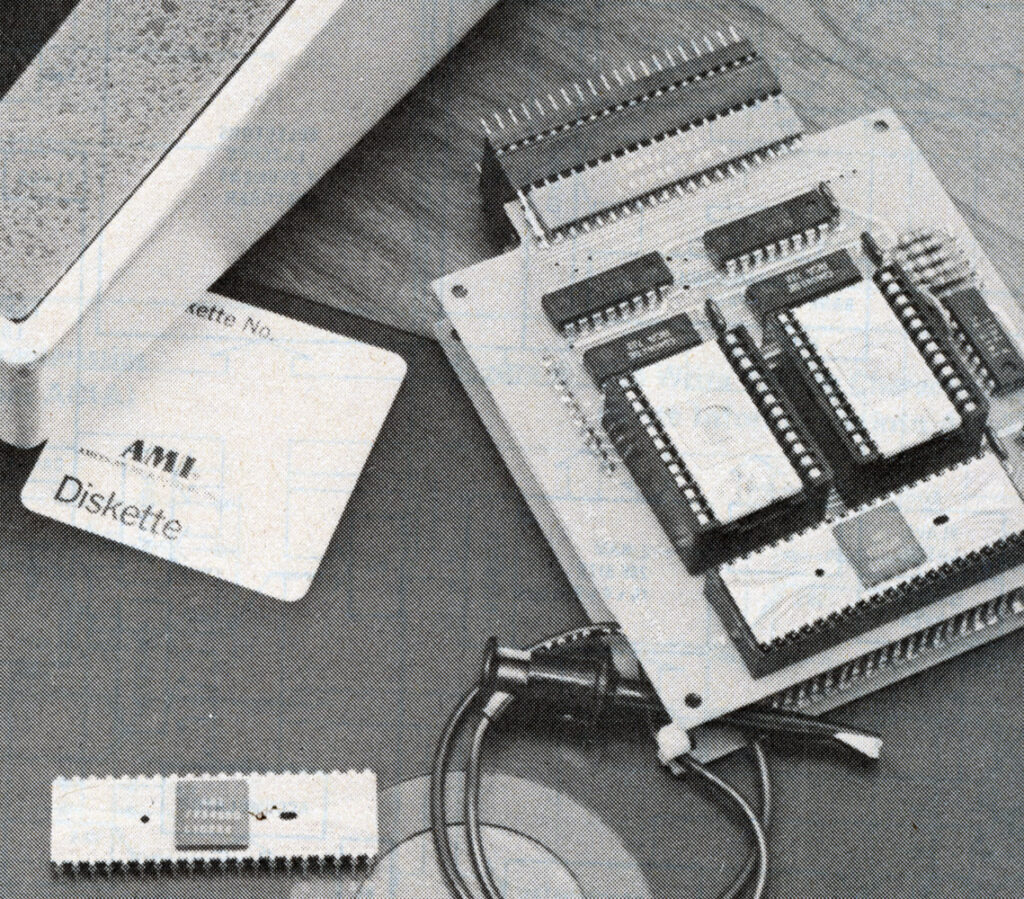

The goal was to co-develop a basic control-oriented processor, something that could run simple machines and industrial automation, toaster ovens, games, and so on. It wasn’t meant to be a general-purpose computer processor cranking out spreadsheet formula results. In many cases the design was meant to fill the same role as the National Semiconductor COPS400 line. Iskra hoped eventually to manufacture the processors in Yugoslavia with technology and equipment from AMI, but Yugoslavia and the United States were in a bit of a weird spot in the 1970s, so the export licenses for fab equipment never materialized. Yugoslavia was rather independent of the Soviet Union (due to the Stalin-Tito rift), which afforded it access to the US that other communist countries of the time didn’t have, but it was still nominally communist. One has to wonder, though, how hard AMI tried to get such licenses.
