The 8-bit Legacy: Why They Will Never Die

This topic comes up a lot: why are old CPU designs still used, and used so widely? Every few years a company announces a new 32-bit or 16-bit CPU/MCU design that makes ‘migration from an 8-bit design easy’ or seeks to replace 8-bit microcontrollers entirely. It does not happen, and it will not for decades.

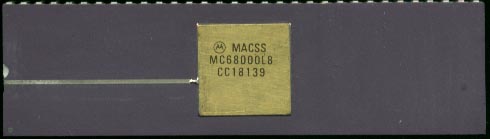

8-bit processors debuted in 1972 with the Intel 8008, so they are pushing 40 years. What many don’t realize is that 32-bit processors debuted in 1979 (Motorola 68k and National Semiconductor 32k). So 32-bit is nothing new, and the same reasoning applies today as it did 30 years ago: why use a Ferrari when a Chevy will do? Most designs simply don’t need that power or complexity. 8 bits is MORE than enough to run a toaster oven, a bread machine, or your TV remote.

Embedded.com had an article on this issue just last week. So why do companies say that their design will replace 8-bit? It’s good PR: it gets people talking about their new processor, and that’s not a bad thing.